Backwards path tracing via sampling the BSDF of the last vertex results in high noise when the light source is small, or hard to find by chance. In this post I look into using Next Event Estimation, which tries to complete all subpaths directly to a point on a light source.

Theory

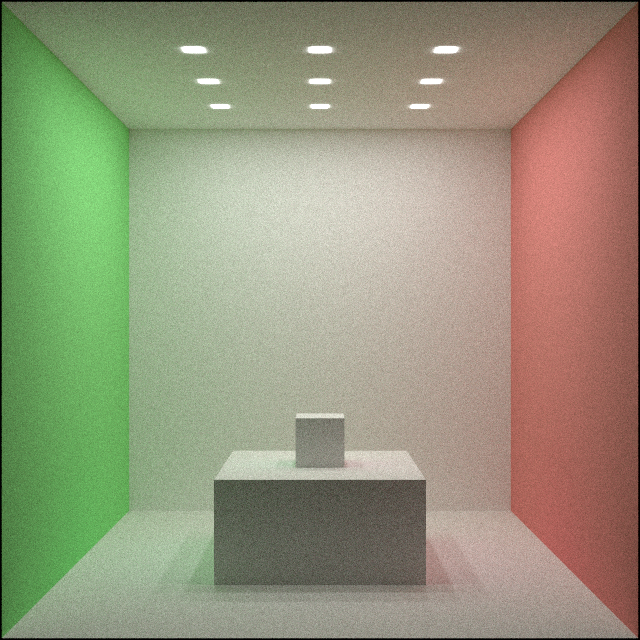

My approach so far has been to create light paths by sampling the BSDF of the material at the last vertex to get a new direction, then intersect it with the scene geometry to get the next vertex. Whenever the last ray hits an emissive surface, that emission is added to the source pixel, weighted with the transmission along the path. An illustration of this can be seen here:

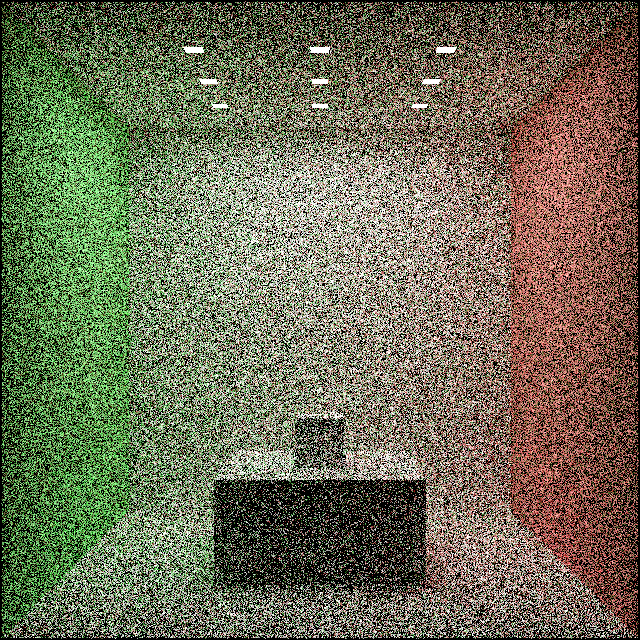

To hit a light source, the BSDF sample has to, by chance, choose a direction that points towards that light source. When the light subtends a small solid angle, i.e. the source is small, or far away, the chance of hitting the light source becomes small. This manifests as noise:

To create this image I allowed 1000 ray casts per pixel, but it is still really noisy.

Instead I am going to use a different approach, called Next Event Estimation (NEE). This basically means that a random point is generated on the light source and each vertex on the path is connected to that point. For a detailed description see these slides from TU Wien.

Sampling the same path uses twice as many ray casts with NEE, but has a way higher chance of finding small light sources.

Sampling point on lights

I will focus on meshes only, as maintaining other primitives such as squares and spheres can be emulated with them. Generating a random point on a light source can be broken down to three steps:

- Picking an object

- Choosing a triangle

- Generating a random point on the triangle

When talking about randomly selecting something, it’s always crucial to consider how that something is exactly chosen, i.e. what the probability density function (PDF) looks like. Luckily in this case regardless of the effective PDF, the result will be unbiased. This is true as long as the PDF>0 for all emissive surfaces, because the sample is later divided by the PDF, effectively implementing importance sampling.

For step 1. I am generating an Alias Table when the scene is assigned. Each object is assigned a weight and then the table is generated in $O(nlogn)$ time, using the sort-based method. From then, choosing an object can be done in O(1) using two uniform random numbers. Similarly, for step 2. I also generate an Alias Table when the object is created.

Finally for step 3. I use the Parallelogram Method (see Triangle Point Picking on MathWorld) as it is surprisingly simple and effective.

The weights are the total radiant power of the objects and triangles. This way the PDF of the light sampling will be proportional to the “amount of light” each object/triangle emits and brighter objects will get more samples.

The Alias Tables are generated on the CPU. This can later be moved to the GPU as well if deemed necessary. Similarly, random bit usage can be improved, the first random number in the Alias Method only need $\left \lceil{log_2(n)}\right \rceil$ bits.

Dealing with transparent materials

Connecting a vertex to a light point assumes light can actually travel like that. In case of a Dirac delta BSDF the probability of light taking that direction can be 0.

To deal with this I combine the two approaches: Use the direct emission sampling when hitting an emissive surface right after transparent bounces.

Since the two methods only sample paths of different lengths they are independent. That means combining them is as simple as adding their results up.

Code changes

First of all, I need to keep track of whether last ray was constrained by the BSDF of the material in sampling code:

bool ray_constrained = true;

Initially it is constrained, since it just exited the camera in a well defined direction. When a transparent material is hit, it’s assumed to not be emissive for now, so just mark the constraint flag:

if (std::get_if<TransparentMaterial>(&material)) {

ray_constrained = true;

}

However when a diffuse material is hit, I need to add the emission contribution from that point:

if (const auto *diffuse_material = std::get_if<DiffuseMaterial>(&material)) {

ray_constrained = false;

if (ray_constrained) {

color = color + diffuse_material->emission * pit->total_transmission;

}

// Code for NEE ...

}

For the Next Event Estimation first I check if the sampled point on the light surface is visible. This is done by simply casting a ray from the current vertex to the light sample point. If the cast returns a point closer to the vertex than the light sample, it is blocked and no contribution is added. Then I check if the origin of the ray is on the same side of the object as the light sample. This can be done using the normal at the vertex, as it always points towards the ray’s origin.

if (visible(pit->p, light_sample.p, objects)) {

const auto distance = (light_sample.p - pit->p).length();

const auto v = (light_sample.p - pit->p) / distance;

const auto cos_phi_x_i = v.dot(pit->n);

if (cos_phi_x_i > 0) {

// Add NEE contribution

}

}

Finally to calculate the contribution from this sample there are additional terms that need to be taken into account.

The BRDF expresses how much light is emitted in the given direction. For a diffuse material that is simply albedo over pi, $\frac{\rho}{\pi}$.

const auto brdf = diffuse_material->diffuse_reflectance / pi;

Since the light sample was chosen based on the light surface, the distance and the orientation of that surface has to be taken into account, this produces the geometric factor:

const auto cos_phi_y = v.dot(light_sample.n);

const auto geometric_term = std::abs(cos_phi_x_i * cos_phi_y) / distance / distance;

Here cos_phi_y is allowed to be negative, because I want triangles to emit light both their sides.

And lastly the light sample’s PDF needs to be taken into account to make sampling unbiased. The update is then:

if (cos_phi_x_i > 0) {

// Calculate NEE coefficients

const auto update = light_material->emission * brdf * geometric_term / light_sample.pdf * pit->total_transmission;

color = color + update;

}

Results

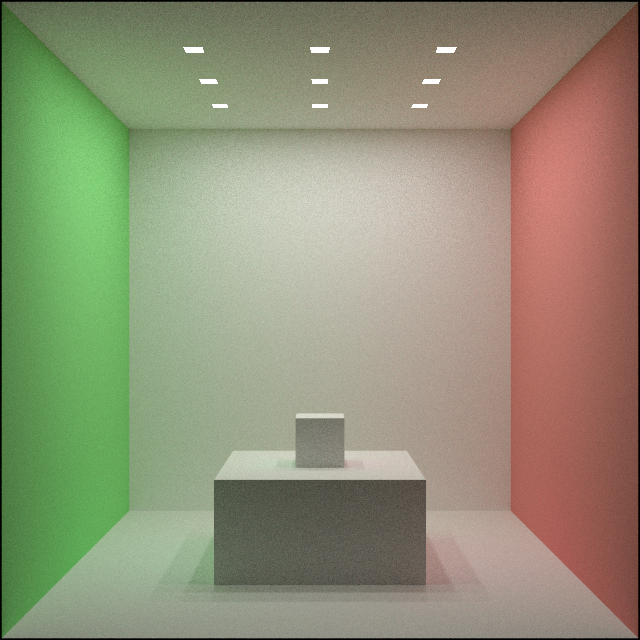

The case I showed above improved significantly. NEE samples small lights excellently.

The most compute heavy operation is ray casting and these two images were created using the same amount of ray casts. This means variance can be reduced by using those casts in a smarter way while keeping the computational cost the same.

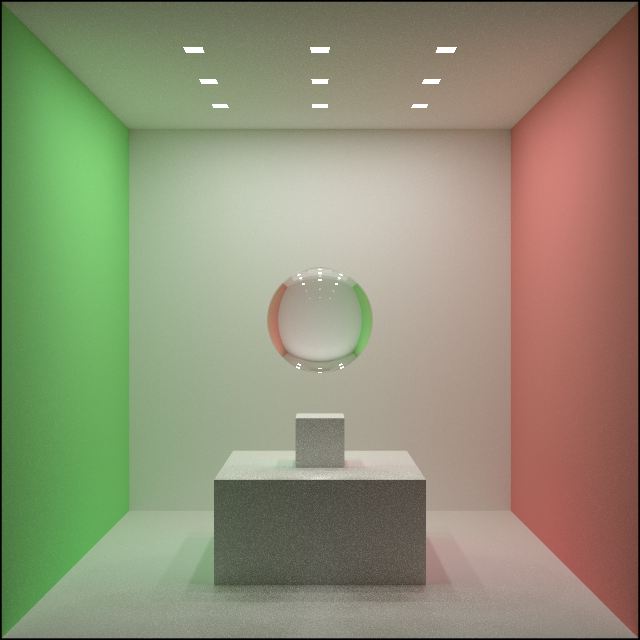

With glass materials it also works as expected. Even though the initial variance is large, the colors converge faster than with the BSDF method:

Sadly it is not a clear win, as NEE actually samples very large lights inefficiently. To demonstrate that I removed the walls and made the ceiling lamp 100 times larger and dimmer. The BSDF based method performs a lot better in that case:

Notice how in this case NEE is the noisy method and BSDF performs splendid. This intuitively makes sense, since the BSDF method has a high chance of hitting the huge light source, while NEE will often pick points far away, meaning the samples are allocated inefficiently.

If only there were a way to combine the strengths of these two methods.

Conclusion

While NEE helps a great deal with small lights, it is no silver bullet. Thanks to this endeavor I understand better how these sampling techniques work and what their weaknesses are.

Next up I will look into combining these techniques using Multiple Importance Sampling (MIS).